Multi-factor authentication (MFA) has long been fundamental in cybersecurity, but the rise of deepfake technology changes the threat landscape in ways that challenge these protections. Highly realistic artificial intelligence (AI)-generated audio, video and images can now mimic trusted individuals with alarming accuracy. They introduce new risks for MFA methods that rely on biometrics or human judgment.

What Is MFA and Its Role in Modern Security?

MFA requires users to verify their identity using two or more distinct factors, such as a password combined with a one-time code sent to a trusted device. By layering something a user knows with something they have or are, it creates a stronger barrier against unauthorized access.
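
To make the "something you know plus something you have" pairing concrete, here is a minimal sketch of a time-based one-time code check in the style of RFC 6238, using only the Python standard library. The `verify_login` helper and its `password_ok` flag are hypothetical names chosen for illustration. Real deployments would add rate limiting, replay protection and secure secret storage, none of which appear here.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: float, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA-1)."""
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = max(int(at_time), 0) // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation (RFC 4226): the low nibble of the last byte picks an offset.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_login(password_ok: bool, submitted_code: str, secret: bytes,
                 now: Optional[float] = None) -> bool:
    """Hypothetical helper: grant access only when BOTH factors check out."""
    if now is None:
        now = time.time()
    # Accept the current and the previous time step to tolerate small clock drift.
    code_ok = any(
        hmac.compare_digest(totp(secret, now - drift), submitted_code)
        for drift in (0, 30)
    )
    return password_ok and code_ok
```

The point of the final `and` is that a stolen password alone, or an intercepted code alone, is not enough to pass the check.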

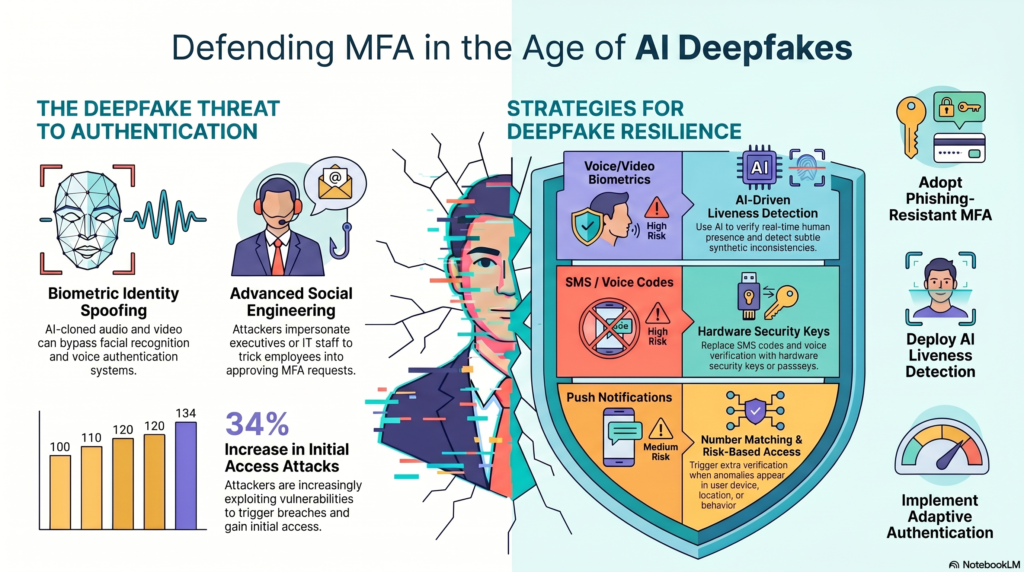

This added protection is increasingly important as threat activity rises, with reports showing a 34% increase in attackers exploiting vulnerabilities to gain initial access and trigger security breaches. Despite improved attack methods, MFA remains essential for protecting sensitive systems, particularly in enterprise and cloud environments where large volumes of critical data and user access points must be secured.

What Are Deepfakes? Why They Matter for Security

Deepfakes are AI-generated audio, video or images designed to mimic real individuals, often making it difficult to distinguish between authentic and synthetic content. Advancements in AI have made these forgeries more convincing and increasingly difficult to detect, which lowers the barrier for malicious use.

This growing concern has prompted regulatory attention, with as many as 40 U.S. states actively working on laws related to deepfake technology, including specific prohibitions on election-related deepfakes. As adoption expands, deepfakes are now widely used in fraud schemes and sophisticated social engineering attacks that target individuals and organizations.

How Deepfakes Undermine Traditional MFA Methods

Highly realistic deepfake media can deceive facial recognition and voice authentication systems using synthetic images or cloned audio. Scammers can replicate a loved one’s voice and create urgent, emotional scenarios to pressure victims into sending money or sharing sensitive information.

In enterprise environments, deepfake audio or video can impersonate executives or IT staff, convincing employees to approve MFA requests or override security controls. These tactics often work alongside phishing campaigns, where deepfake-driven trust helps attackers capture one-time passwords or other authentication factors to gain access.

Strategies to Secure MFA Against Deepfake Threats

Organizations must strengthen MFA strategies to address the growing risks introduced by deepfake-driven attacks. A layered and adaptive approach helps reduce reliance on easily spoofed factors while improving overall authentication resilience:

- Adopt phishing-resistant authentication methods: Use hardware security keys or passkeys to minimize reliance on SMS codes or voice verification.

- Enhance biometric systems with liveness detection: Implement advanced checks that verify real-time human presence and detect synthetic media attempts.

- Apply risk-based and adaptive authentication: Evaluate the device, location and behavior to trigger additional verification when anomalies appear.

- Limit MFA push approvals and enforce number matching: Reduce MFA fatigue risks by requiring users to actively verify login attempts instead of approving requests passively.

- Train workers on deepfake awareness: Educate teams to recognize synthetic audio and video, especially in urgent or high-risk scenarios involving sensitive access.

- Establish strict verification protocols: Require secondary confirmation through trusted channels before approving access requests or system changes.

- Secure identity and access workflows: Enforce least privilege access and continuous monitoring to reduce the impact of compromised credentials.

- Integrate AI-driven threat detection tools: Use systems that identify anomalies in authentication patterns and detect potential deepfake indicators.
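
As one illustration of the risk-based and adaptive approach in the list above, the sketch below scores a login attempt from device, location and behavior signals and decides whether to allow it, ask for an additional factor or deny it. The `LoginContext` fields, the weights and the thresholds are all hypothetical values chosen for readability. A real system would tune them against its own data.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"  # require an additional, phishing-resistant factor
    DENY = "deny"


@dataclass(frozen=True)
class LoginContext:
    """Hypothetical signals gathered for one login attempt."""
    known_device: bool
    usual_location: bool
    usual_hours: bool
    requests_sensitive_access: bool


# Illustrative weights only; these numbers are assumptions, not recommendations.
DEVICE_WEIGHT = 40
LOCATION_WEIGHT = 30
HOURS_WEIGHT = 10
STEP_UP_THRESHOLD = 30
DENY_THRESHOLD = 70


def risk_score(ctx: LoginContext) -> int:
    """Add a penalty for every signal that deviates from the user's norm."""
    score = 0
    if not ctx.known_device:
        score += DEVICE_WEIGHT
    if not ctx.usual_location:
        score += LOCATION_WEIGHT
    if not ctx.usual_hours:
        score += HOURS_WEIGHT
    return score


def decide(ctx: LoginContext) -> Action:
    """Map the risk score to an action; sensitive access always steps up."""
    score = risk_score(ctx)
    if score >= DENY_THRESHOLD:
        return Action.DENY
    if score >= STEP_UP_THRESHOLD or ctx.requests_sensitive_access:
        return Action.STEP_UP
    return Action.ALLOW
```

Forcing a step-up for sensitive access regardless of score reflects the idea that a convincing deepfake may arrive from a familiar device and location, so context alone should not clear high-impact requests.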

The Role of AI in Detecting Deepfakes

AI-powered detection tools analyze audiovisual and behavioral patterns to identify subtle inconsistencies that often escape human perception. These systems evaluate facial micro-expressions, timing irregularities and contextual mismatches to flag potentially manipulated content. By processing massive quantities of video data, AI can detect anomalies in emotional responses and interaction patterns.

This capability allows detection tools to distinguish between genuine individuals and sophisticated bots or deepfake-generated media with high accuracy. Integrating these detection capabilities into authentication and fraud prevention workflows adds an intelligent verification layer. It helps organizations identify threats in real time and reduce the risk of compromised access.
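
A rough sketch of how detector output might feed a verification workflow follows. It assumes upstream detectors (not shown, and entirely hypothetical here) already produce scores between 0 and 1 for micro-expression, timing and contextual anomalies. The thresholds and the routing labels are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectorSignals:
    """Scores in [0, 1] from hypothetical detectors; higher means more likely synthetic."""
    micro_expression: float
    timing: float
    context: float


def synthetic_likelihood(signals: DetectorSignals) -> float:
    """Use the strongest single anomaly, so one clear red flag is enough."""
    values = (signals.micro_expression, signals.timing, signals.context)
    if any(not 0.0 <= v <= 1.0 for v in values):
        raise ValueError("detector scores must lie in [0, 1]")
    return max(values)


def route(signals: DetectorSignals, review_at: float = 0.5,
          block_at: float = 0.85) -> str:
    """Route a session to 'proceed', 'manual_review' or 'block' (assumed thresholds)."""
    score = synthetic_likelihood(signals)
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "manual_review"
    return "proceed"
```

Taking the maximum rather than the average is a deliberate, conservative choice in this sketch: a deepfake that is flawless in two dimensions but clearly off in a third is still sent for review.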

Strengthening MFA for AI-Driven Threats

Deepfakes reshape the threat landscape and expose critical weaknesses in traditional MFA approaches, especially those reliant on biometrics or human verification. Organizations must adopt layered and phishing-resistant authentication strategies to stay ahead of risks and protect sensitive systems effectively.

As the Features Editor at ReHack, Zac Amos writes about cybersecurity, artificial intelligence, and other tech topics. He is a frequent contributor to Brilliance Security Magazine.
