In Episode S8E6 of the Brilliance Security Magazine Podcast, host Steven Bowcut speaks with Aviv Nahum, CEO and co-founder of Above Security, about one of the most consequential shifts underway in cybersecurity: the move from static, rule-based detection to AI-driven reasoning. Drawing on his background in elite military cyber work, startup leadership, and building AI systems for security use cases, Aviv explains why today’s most difficult cyber problems increasingly require context, interpretation, and judgment rather than simple alerting logic. The conversation focuses especially on insider threat, where human behavior, intent, and situational context make detection far more complex than traditional external threat models.

Summary

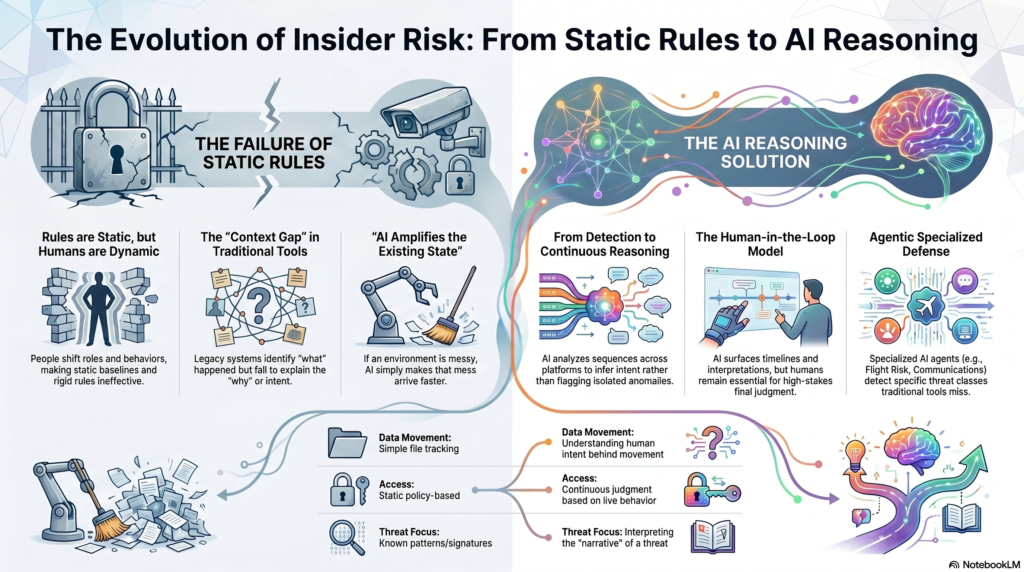

Steven and Aviv begin by exploring where AI is already creating genuine value in cybersecurity and where the industry still tends to overstate its capabilities. Aviv argues that the most important breakthrough is not merely better automation or faster pattern matching, but AI’s ability to reason across multiple signals and make sense of context in ways traditional machine learning and legacy rule-based systems could not. He explains that older approaches were built to detect predefined threats and known patterns, while modern environments involving cloud platforms, SaaS applications, identity systems, and AI tools are far too dynamic for that approach to hold up on its own. In his view, the real promise of AI is its capacity to move security from predefined logic to continuous reasoning.

The discussion then turns to the balance between attackers and defenders in the age of AI. Aviv pushes back on the most alarmist narratives, describing AI primarily as a force multiplier rather than an entirely new category of threat. He notes that attackers can certainly use it to scale reconnaissance, experimentation, and execution more rapidly, but defenders can also use it to process more data, analyze faster, and respond more efficiently. At the same time, he cautions against assuming that AI can already replace human decision-makers or fully automate sophisticated security operations. Systems that appear confident without truly understanding context can be dangerous, especially in cybersecurity, where liability, judgment, and consequences still matter.

A major theme of the episode is insider threat and why it remains such a stubborn challenge for security teams. Aviv explains that most security technologies are built around software-based environments that are relatively predictable and can be mapped, modeled, and controlled. Humans are different. People shift roles, adapt to pressure, behave differently depending on circumstances, and cannot be reduced to static baselines or simple rules for long. That is why, he says, insider risk is fundamentally a reasoning problem rather than a rules problem. Instead of asking only whether a user performed a suspicious action, effective security systems now need to ask why that action happened in that moment, under those conditions, and in that broader context.

Aviv goes on to explain that AI is especially useful when it helps teams infer intent from sequences of behavior rather than isolated signals. In the old model, an alert might simply say that a user downloaded a large volume of data or moved files to a certain location. But that alone does not explain whether the action reflects normal work, negligence, curiosity, or malicious intent. Aviv and Steven discuss how context changes everything: who the employee is, what kind of files are involved, where the data is going, what deadlines or pressures may exist, and whether there are other warning signs that make the behavior more or less concerning. Aviv uses the analogy of a soup: the more ingredients an investigator has, the better both human and AI judgment become.

The conversation also examines where human judgment must remain in the loop. Aviv says humans are still essential wherever ambiguity is high, consequences are serious, and multiple interpretations may be valid. In those situations, AI may serve as a copilot that surfaces timelines, explanations, and likely interpretations, but final decisions still need human oversight. That is particularly true in insider risk, where incomplete context can lead to unfair conclusions if a machine acts too quickly or too autonomously. He suggests that the boundary between human and machine judgment may shift over time, but as of now, responsible use of AI in security still depends heavily on expert review.

Later in the episode, Steven asks how security leaders should adopt AI responsibly. Aviv’s answer is not to start with governance language alone, but with operational clarity. He argues that many organizations fail before they ever reach the ethics stage because they deploy AI without first understanding the exact problem they are trying to solve. He warns against layering AI on top of fragmented workflows, unclear ownership, noisy alerts, and broken systems, since doing so often produces faster noise, more confident wrong answers, and even harder-to-debug environments. In his view, responsible adoption begins with restraint, a clear use case, and a disciplined focus on genuine bottlenecks.

Aviv also shares how he thinks about building AI products that solve real security problems instead of simply sounding impressive. He says the clearest validation comes when a system reduces manual workload, shortens investigation time, and reveals meaningful true positives that teams would otherwise have missed. In the insider threat space, he found value by replacing raw alerts with fuller investigations: timelines, explanations, and reasoning that help analysts act instead of manually stitching together logs. For Aviv, one of the strongest signs that a product is addressing a real problem is fast feedback from customers and rapid time to value.

The episode closes with a broader look at how AI is changing cybersecurity roles. Aviv suggests that, much as AI has turned many writers into editors, it is shifting security professionals from producing outputs to evaluating them. Analysts, engineers, and investigators increasingly review, refine, and judge AI-generated findings rather than building every conclusion from scratch. Even so, he emphasizes that the fundamentals still matter most. Security leaders should focus on visibility, identity hygiene, access control, and reducing operational noise before expecting AI to improve outcomes. As he puts it, AI amplifies whatever state an organization is already in. If the environment is strong, AI can make it stronger. If it is messy, AI can make that mess arrive faster and louder.

About Our Guest

Aviv Nahum is a founder and technologist focused on applying AI to real-world cybersecurity problems. His background includes service in an elite Israeli Military Intelligence cyber unit, which helped shape his perspective on the intersection of cybersecurity and artificial intelligence. Previously, he was CTO and co-founder of Ctrl, which was acquired by Sana AI, now part of Workday. Aviv is currently the CEO and co-founder of Above Security, where he is focused on AI-driven prevention and detection of insider threats.

Additional Resources

Video Overview

Infographic

Steven Bowcut is the Editor-in-Chief of Brilliance Security Magazine and host of the BSM Podcast, where he speaks with cybersecurity leaders, innovators, and practitioners about the ideas, technologies, and strategies shaping the future of cyber defense.